Ethical Artistry: Changing our Approach & Evaluating our Efforts

This post is the third in a four-part series looking at concert curation and some of the larger ethical dilemmas we all face as artists as a result. If you want to jump back, Part 1 and Part 2 are here; Part 4 will follow next week.

Changing our Approach to Avoid the Easy Way Out

In Part 1 and Part 2 of this series, I argue that our musical world can be more rewarding and have its most substantial positive impacts when we present projects passionately and when we take a deeper look at our institutions, decision-making, and artistic processes.

Part 2 showed how simple issues can get complex pretty quickly, and that it can take real effort to confront ethical issues in a meaningful way. This “real effort” part can be a disincentive. Why spend extra time thinking through complex issues—especially if there is an easy fix in front of us? It’s often simpler to keep moving through our work and busy lives by avoiding complex questions, rather than taking time to confront them and come up with nuanced solutions.

Unfortunately, it is this “easy way out” or “path of least resistance” or “quick fix” (whatever you want to call it) that can lead us into ethical gray areas. I’ve argued that by spending a little more time sorting through the complexities of both our decision-making and execution, we can make a real difference. And there’s even better news: if you start building this “extra” planning into the framework of your professional life, it can quickly become part of your normal routine, without adding any “extra” or undue burden.

Let’s consider an example. You want to put on a concert! You’re excited about it, and a little scared (because you haven’t done a lot of this before), but you’re really committed to making it happen! Many of us have been in this exact position. So, we launch into what is easy and familiar: we program pieces we already know, written by composers we’ve worked with before, and we team up with a local ensemble at a cheap venue. This is sort of a “simplest variables” version of your project, and it is coming from a genuinely good place in your heart!

Now, halfway through your project, you are already many emails in (with the ensemble, venue, music rental companies, and so on), when you get the following message from a colleague: “Hey! I got your project e-blast. Looks cool! I noticed all of the composers are alumni of School X. Did School X sponsor this concert?”

Hmm. You pause. This wasn’t something you had really considered. You were turning to music you knew and loved, and maybe it’s no surprise that the composers you picked were your classmates or friends from a certain school or geographic region. And, it isn’t necessarily a moral failure on your part, but you’ve clearly been a little narrow or had a bit of a blind spot that may have held back your programming. So, what can you do about it? At this point, halfway through, overhauling the project would be a major undertaking and would add a lot of extra work as you redo many steps.

Now, imagine a variation of your original concert and idea. We’re back at the beginning. You are still passionate and excited, and you’ll still start the process with pieces you know, by composers you’ve worked with. But…you want to make sure your program is as strong and vibrant as it can be. So you’ll also make sure to take a look at a few other pieces in their catalogs, and you’re going to ask for suggestions from colleagues for other composers outside of your circle. Spend some time listening there, too. Now it’s time to contact ensembles. You should still plan to reach out to the local group you’ve worked with before, but maybe there are one or two others you can consider as well. Have you emailed them before, or even checked out their websites and work? And what about venues? Can you expand your list? Maybe another great option is out there?

If you didn’t plan in these layers at the start, they become a major burden later in a project, but at the beginning, these “extra” steps aren’t really extra at all. In fact, if we build them into the project from the outset, they sort of seamlessly integrate into the overall planning process, and they can lead to a final result that is much more impactful! In this particular hypothetical example, some extra listening at the beginning might lead to a program that includes music of a few close friends, as well as others you didn’t know before who have similar artistic work.

Here’s the big takeaway: it can be hard to accommodate a lot of ethical nuance when we are in the thick of our busy professional routines and schedules. This is often where we run into dilemmas and we don’t have time to pursue anything other than the “easy way out.” But, if we can put in a little more planning at the start of our work, and tweak our general approach, our final work will likely have a more virtuous impact, without adding more burden into our routine.

Evaluating Our Efforts: The Broad View, Quotas & Metrics, and Qualitative Concerns

Many of us care about ethical artistry, but how do we measure our efforts? And how do we balance competing demands, if it feels like promoting one set of criteria will detract from another? (We looked at some of these in Part 2: for example, should I program more pieces, knowing that each will have less rehearsal time?) Every case can be a little different, but here are some general tenets and tools that can aid us in evaluating our work.

The Broad View

As I suggested in Part 1, it is particularly important that we take a broad view in assessing our work. Invariably, any single project you focus on will favor certain criteria/variables over others. For example, if you present a regional composer festival, you will automatically be promoting music of composers inside that region, and excluding music outside of it. There are pluses (promoting local artists; strengthening regional ties) and minuses (not giving audiences exposure to outside composers; excluding based on geography).

It seems to me that if this is the only artistic project you pursue regularly, the minuses stand out a bit. Do you have a bit of a blind spot? But, if we take the broad view, and we consider that you pursue many projects which promote living composers of diverse styles, demographics, geographies, and so on, then the potential “minuses” of your regional festival seem to melt away in terms of the larger picture of your artistic work. (This is true for any specific programming initiative like focusing on regional composers; focusing on a certain ethnic demographic; focusing on a certain aesthetic movement; etc.)

The broad view can also provide important context. In a real-world example, I attended two concerts a few months apart in 2017 featuring two different violinists. (I’ve tried to keep any living artist information anonymous.)

Program 1:

Violinist 1

Beethoven, Sonata No. 3

Franck, Sonata in A Maj

Anonymous Living Composer, World Premiere

Moszkowski, Suite in G Maj

Sarasate, Navarra

Program 2:

Violinist 2

J.S. Bach, Sonata No. 1 in G Minor

J.S. Bach, Partita No. 1 in B Minor

J.S. Bach, Sonata No. 3 in C Major

J.S. Bach, Partita No. 2 in D Minor

At first glance, neither of these programs seems especially adventurous. Are these projects that were curated with passion? Or are they run-of-the-mill violin recitals? (Maybe something in between?) The context of the broad view makes all the difference in this specific case.

Program 2 features four pieces all by the same composer (Bach), which are all in the same general style (dance suite) from the same era (Baroque). Program 1 at least has a bit more variety in that it features composers who are German, French, American, Russian, and Spanish, and it also features music of different eras (early-Romantic, late-Romantic, and the commission/premiere of a new work). Under a short-term view, Program 1 seems to have a bit more going on, since it features a living composer and a wider swath of musical material.

Yet, when taking a broad view, Program 2 was perhaps even more powerful and exciting for me to experience for a few reasons. Most importantly, the violinist performing Program 2 has dedicated a particularly large part of his performing career to championing the music of living composers (this includes his founding of a major new music string quartet; his work as a core member of a major new music large ensemble; and his work to premiere numerous solo works). Second, the violinist performing Program 2 had worked with great conviction on this program so that it wasn’t “run of the mill.” The works were performed attacca, with thought and attention given to how one suite would elide into the next, so that the immediate transition provided a curious re-contextualization of the subsequent music. Further, the performer added contemporary techniques of ponticello bow position and harmonics in deliberate moments so as to inflect and change the intonation and color of Bach’s original score. The sum total of these curatorial decisions gave Bach’s music a peculiar freshness and challenged the listener to consider an interpretation divergent from the norm.

Thus, within the larger scope (“broad view”) of his work, Violinist 2 and Program 2 didn’t seem to be a conservative rendering of standard Bach works at all; instead, it was a refreshing approach to Baroque music from a performer whose specialization in contemporary music added new ideas and insight. Perhaps most importantly, the project revealed a passion, conviction, and thoughtfulness that resonated deeply with the audience. While Program 1 did showcase a living composer and did include thoughtful, deeply musical playing, it did less to provide a powerful curatorial experience, and more to highlight the performer’s attributes as a violinist. While neither of these programs was a statement on the diversity and talent of today’s living composers, the “broad view” provides especially important context as to the deeper artistic merit each program did or didn’t provide.

Quotas & Metrics: What They Do and Don’t Tell Us

Programming quotas and statistical metrics can be useful tools to quantify some of our artistic efforts. When we use them thoughtfully, they can help us gather meaningful information and guide our decisions, but these statistical tools, by themselves, are not the whole story!

Imagine your ensemble is having an artistic planning meeting. You all agree you are committed to championing contemporary composers! How will this actually play out? Are there specific quantitative criteria you are considering?

Are you interested in:

- the total number of contemporary works you program in a season?

- the total minutes of music, regardless of how many total works?

- the specific percentage of contemporary works within your larger season?

- certain specific demographics (e.g. age, race, gender, nationality, etc.) of the composers?

- defining “contemporary” based on decade of composition or a composer’s age?

- only your own ensemble’s metrics, or how they fit within those of the larger field?

In these examples, hard statistics can be useful. If you’re coming off of a busy season where many decisions were made “off the cuff,” a statistical spreadsheet at season’s end may show where your programming tendencies lie: male vs. female composers; local vs. non-local artists; American vs. international composers; pre-2000 vs. post-2000; etc. If something seems out of balance, can you pay more attention to it next season by planning ahead?

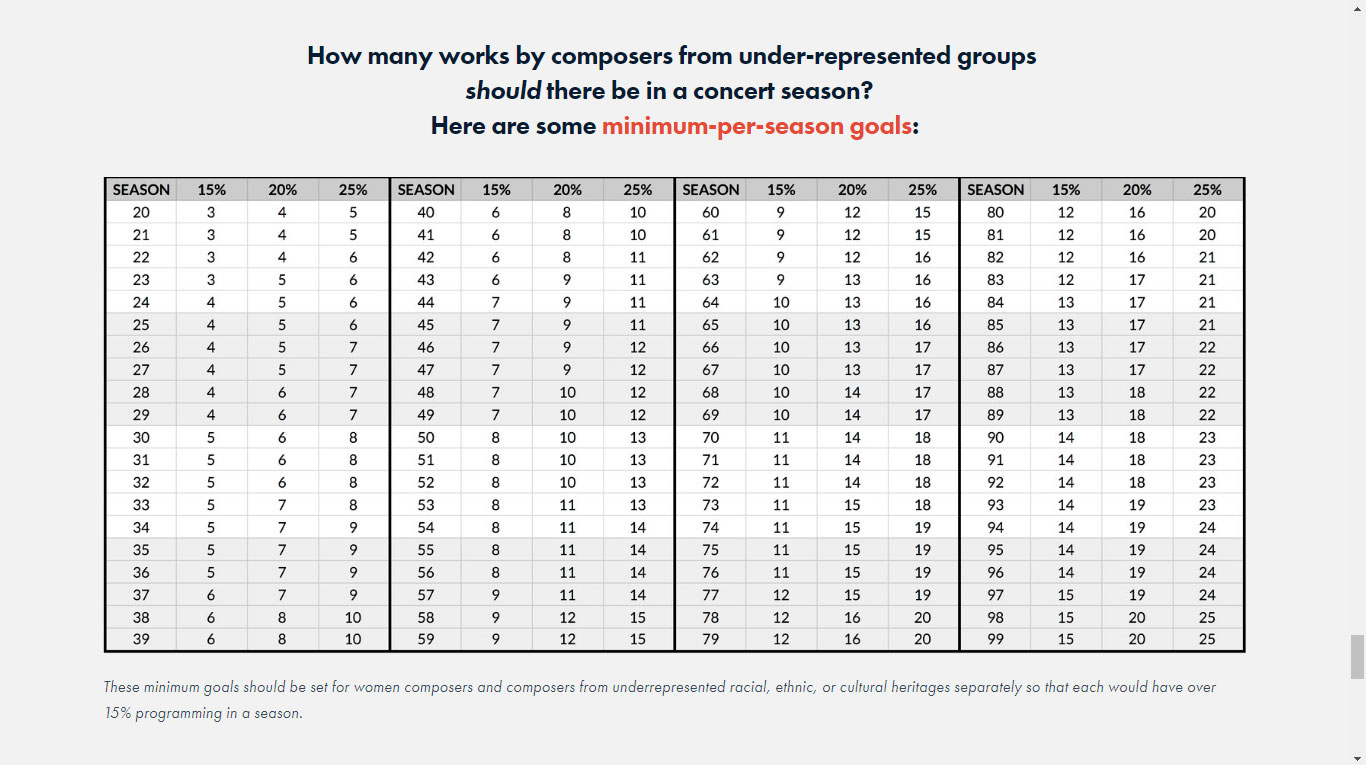
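If you’d rather not build that season-end tally by hand, here is a minimal Python sketch of what such a spreadsheet might compute. Everything in it is an assumption for illustration: the works, years, and durations are invented, the `summarize` helper is hypothetical, and the year-2000 cutoff is just one of the possible definitions of “contemporary” from the list above.

```python
from collections import Counter

# Hypothetical season: every title, year, and duration is invented for illustration.
season = [
    {"title": "Work A", "year": 1806, "minutes": 35, "local": False},
    {"title": "Work B", "year": 2015, "minutes": 12, "local": True},
    {"title": "Work C", "year": 2019, "minutes": 8, "local": True},
    {"title": "Work D", "year": 1888, "minutes": 28, "local": False},
    {"title": "Work E", "year": 2003, "minutes": 20, "local": False},
]

CONTEMPORARY_SINCE = 2000  # assumption: one possible definition of "contemporary"


def summarize(works, since=CONTEMPORARY_SINCE):
    """Tally a few of the quantitative criteria for one season."""
    contemporary = [w for w in works if w["year"] >= since]
    total_minutes = sum(w["minutes"] for w in works)
    contemporary_minutes = sum(w["minutes"] for w in contemporary)
    return {
        "works": len(works),
        "contemporary_works": len(contemporary),
        # Share of the season by number of works vs. by minutes of music.
        "share_by_count": len(contemporary) / len(works),
        "share_by_minutes": contemporary_minutes / total_minutes,
        "local_vs_nonlocal": Counter(
            "local" if w["local"] else "non-local" for w in works
        ),
    }


summary = summarize(season)
for key, value in summary.items():
    print(key, value)
```

Notice that even in this tiny made-up season, the share of contemporary music looks quite different when counted by number of works than when counted by minutes.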

These statistics also illustrate how your programming stacks up within the field. The Institute for Composer Diversity has started keeping statistics on various U.S. orchestras, and they have provided a helpful chart (below) that shows how many works you need to program each season in order to meet a quota of 15% of works by under-represented composers.

Source: Institute for Composer Diversity

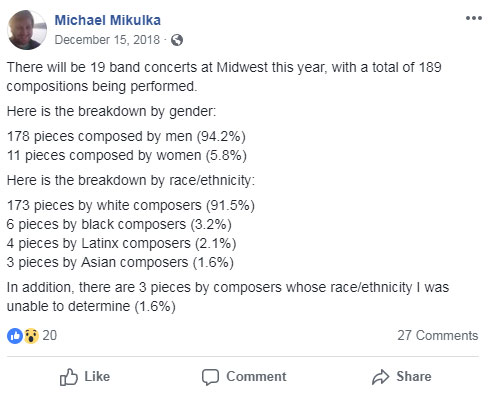
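The arithmetic behind a quota like this is simple enough to check yourself. The sketch below is not the Institute’s method or data; it assumes the quota is a plain share of the total number of works in a season, rounded up to the next whole work, and the `works_needed` helper is a hypothetical name.

```python
def works_needed(total_works, quota_percent=15):
    """Smallest whole number of works that is at least quota_percent of total_works."""
    # Integer arithmetic (a ceiling division) avoids floating-point rounding surprises.
    return (total_works * quota_percent + 99) // 100


# Hypothetical season sizes, chosen only to show the rounding.
for total in (10, 20, 25, 40):
    print(total, "works in the season ->", works_needed(total), "needed")
```

For small seasons the rounding matters: a 10-work season needs 2 works to reach 15%, which is actually 20% of the program.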

In past years, groups like the League of American Orchestras or the Baltimore Symphony have gathered hard data, in an effort to consider how our institutions are functioning. And, individual composers have started keeping tabs in areas where they are passionate. For example, composer Michael Mikulka publishes some statistics on Facebook each year of the repertoire programmed at the annual Midwest Clinic for band and wind ensemble music.

So what do we do with all of this information? How can it be a helpful tool? And, what other factors should we consider besides quantitative data?

Sometimes these sorts of clear statistics help keep us honest. Michael Mikulka’s data, for example, shows that at one of America’s major music conventions, an overwhelming majority of programmed music is by white male composers. His data doesn’t speak for every ensemble, director, or composer working in the field, but it does show an alarming demographic disparity at a major convening. (And his statistics have been consistently similar over multiple seasons.)

In this case, the data (“works by composers of demographic X”) seems pretty black and white, and the ethical implications are obvious: whole groups of composers are under-represented.

But, as much as this data tells us, it is, in itself, a statistical summary of choices already made, not an explicit moral compass that guides our decision-making process in the future. This distinction is vital if we want this data to help us. Otherwise, we may fall prey to taking rash action that doesn’t solve the underlying problems.

As we considered in Part 2, in a controversial programming debate involving a serious look at hard data, the Philadelphia Orchestra made a big quantitative shift in their season: going from 0 works by female composers to 9 works. This was an undeniably positive step in a quantitative category, but it didn’t fully address other larger ethical considerations, such as “quality of opportunity” for younger composers (male and female) to have access to orchestras. In this case, and others like it, statistical data and quotas (e.g. programming Y works by demographic X in a single season) only partially satisfy our ethical ambitions.

This illustrates two important limitations about quotas and statistical metrics:

- They often emphasize quantitative thinking, not qualitative thinking.

- They require you to choose what categories you measure and value most.

If we have a clear vision of what ideals we hope to promote in our artistic work, we have a better sense of which statistics will be useful to gather and use in evaluation. And, as will become clear, no single category or criterion will be sufficient for measuring the impact of our work.

Let’s look at the metric “total number of works” by contemporary composers. If this is the main statistic we use to evaluate our programming, a group like the New York Miniaturist Ensemble, which performed more than 300 contemporary pieces during their existence from 2004-2010, did a pretty amazing job and probably outperformed (in quantity) many other new music groups. (Be honest: how many works has your own group performed in six seasons?)

However, according to NYME’s mission and approach, all of the “performed music [was] composed of 100 notes or fewer.” In this case, the sole metric of “total number of works performed” isn’t telling us the whole story! NYME still did something interesting and important that supported composers, but the single statistical category of “total works performed” doesn’t meaningfully compare their efforts to those of other groups.

This is obviously an extreme example, but there are many others where quantitative metrics only tell part of the story. For example, if the Nashville Symphony features four composers in their Composer Lab every two years, and each composer gets roughly equal rehearsal time, how does their approach compare to a group like the American Composers Orchestra whose Underwood Readings often feature five or six composers every year, but who may not get quite as much rehearsal time since it is being divided amongst more participants? In this case, we have competing metrics: “most composers performed” vs. “most rehearsal time” for individual pieces.

What about a concert experience like the Riot Ensemble’s performance of a single 70-minute work (Solstices by Georg Friedrich Haas, exploring light and darkness) relative to Contemporaneous’s “Orbit” (a program featuring five works also exploring sound and light)? Here, data from any single metric (“total works featured” vs. “total amount of music” vs. “amount of rehearsal time for a single piece”) would tell a very different story.

The truth is, in all of these cases, groups are working hard to give living composers a voice. The point is not to praise one group over another based on hard statistics, but to point out that no single metric can tell us the whole story about the impact of our artistry! In fact, these examples serve to illustrate that quantifiable data is only part of the story. If our programming is oriented solely around meeting numerical quotas, we can easily lose sight of other considerations.
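To see how quickly the “winner” changes depending on which number you look at, here is a small Python sketch comparing two program formats. It is only an illustration: Program A borrows the single-70-minute-work format, but the durations for Program B and the shared rehearsal budget are invented, and neither stands in for any real ensemble’s data.

```python
# Hypothetical programs. A is one long work; B is five shorter works.
# B's durations and the rehearsal budget are invented for illustration.
programs = {
    "A (one long work)": [70],
    "B (five shorter works)": [10, 12, 15, 9, 14],
}
REHEARSAL_BUDGET_MIN = 600  # assumption: same total rehearsal time for each program


def metrics(durations, rehearsal_budget=REHEARSAL_BUDGET_MIN):
    """Compute three competing metrics for one program."""
    return {
        "works": len(durations),
        "minutes": sum(durations),
        "rehearsal_per_work": rehearsal_budget / len(durations),
    }


results = {name: metrics(durations) for name, durations in programs.items()}


def leader(metric):
    """Name of the program that scores highest on a single metric."""
    return max(results, key=lambda name: results[name][metric])


for metric in ("works", "minutes", "rehearsal_per_work"):
    print(f"{metric}: {leader(metric)}")
```

With these made-up numbers, Program B leads on total works while Program A leads on minutes of music and on rehearsal time per piece, so ranking by any one column alone would be misleading.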

Quantitative vs. Qualitative Concerns

While I do advocate using statistics to help quantify and compare our artistic efforts with those of the larger field, I caution us to use the data thoughtfully and to make sure we pay attention to other concerns that are more naturally evaluated with qualitative intuition, rather than hard data.

The issue of quantitative vs. qualitative impact is one of great complexity in ethical debates. Moral philosophers, economists, and political theorists have long scrutinized the merits of strict utilitarianism (which emphasizes helping the most people possible, or doing the most good possible) relative to other pluralist or egalitarian views (that ask us to consider the qualitative nature of the impact we are having, in addition to the total amount).

I wouldn’t stipulate that you should fall on one side of this debate versus another, but we should be cognizant of our work’s quantitative and qualitative impacts. If we are putting all of our efforts into a quality artistic experience, but overlooking hard data which points to our intuitive programming biases, we likely have a blind spot we could address. On the other hand, if we are checking off metric boxes in our programming, but presenting lackluster concerts that aren’t engaging others in vital ways, what is the deeper extent of our impact?

My biggest caution is that we not be too quick to pat ourselves on the back, just because we seem to be satisfying statistical data in our work. Sometimes this data distracts from other issues we are not addressing—like quality of artistic experience; quality of access to our work; and other factors that don’t easily show up in a pie chart. I argue in Part 1 that we have to be really passionate in our programming, and trust our intuition to pursue projects we deeply believe in. Sometimes a single concert experience we create with this passion can reach others in tremendously deep ways that are hard to quantify in hard data, but which are every bit as relevant, if not more so, than meeting a categorical quota.